Data Lake Management Portal Drives Digital Transformation in Global Chemical & Consumer Goods

Digital Transformation: RUBICON Develops a Secure Cloud Native Platform for a Global Chemical & Consumer Goods Company

Overview

The global Chemical & Consumer Goods Company reached out to RUBICON to help them digitize and automate their business operations. As a company with over 50,000 employees that is active in a number of markets worldwide, ranging from beauty care to laundry and home care, the client was ready to move on from its traditional way of operating. The company's employees and partners needed to easily store, access, and share substantial amounts of data on one platform. The solution we developed for them was a secure, cloud-native web platform, the "Data Lake Management Portal," that enables users to access and exchange relevant data located in the Azure cloud (from Data Lake, Data Warehouse, and other sources) in a secure and well-managed way. RUBICON's goal was to develop a generic and highly modular web-based portal that serves at least 1,000 concurrent users at peak and handles more than 10 million files, with 30,000 new files added per day.

Challenges

While developing the portal, our team had to overcome the following challenges:

Developing a cloud web-based explorer that gives users quick and easy access to data and the ability to share and store data in Data Lake;

Implementing a scalable cloud architecture to serve at least 1,000 concurrent users at peak and more than 10 million files, with 30,000 new files per day;

Creating Single Sign-On (SSO) authentication and authorization using Azure Active Directory, with integration with other services and APIs within the organization;

Integrating with the organization’s big data pipelines, APIs, and other services;

Designing an intuitive and modern web application;

Developing a Single-page application (SPA) for a fast and smooth user experience.

Solutions

RUBICON's team worked together with the client to understand their demands by organizing a Lean Inception Workshop. During the four-day workshop, both teams collaborated to define the product vision and goals, explore features and user journeys, and review the technical, business, and UX components. By the end of the workshop, we had release version 01 ready for development, with a set of clear guidelines and features.

After creating the product roadmap and introducing the idea to the RUBICON team, the workload was split up into two-week sprints, and the team worked in Scrum, an Agile framework. Once release version 01 was complete, the beta version was launched, and the client's team was given access to the portal so they could incorporate it into their work routine.

The main goal was to create a cloud-native solution to support the client’s requested features and requirements. The software requirements included: being able to run on Azure cloud, easy long-term maintenance, stability, on-demand scalability, and using an existing Azure Active Directory. We used Azure Platform as a Service (PaaS) offerings to keep operational effort to a minimum, so the client wouldn’t have to maintain and manage resources such as storage, servers, applications, services, etc.

User Testing

After launching release version 01, RUBICON organized a set of user testing interviews. Based on our previous user testing experience, we subscribe to the theory that you only need 5 users to identify approximately 80% of all usability problems. The reasoning is that as you add more and more users, you learn less and less, because you keep seeing the same issues again and again.
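The diminishing-returns argument is commonly expressed with the Nielsen-Landauer model, in which the share of usability problems found by n test users is 1 - (1 - p)^n. The sketch below evaluates that formula. The per-user discovery rate p = 0.31 is the average reported by Nielsen and Landauer and is an assumption here, not a figure measured in this project.

```typescript
// Share of usability problems expected to be found by `users` testers,
// assuming each tester independently reveals a fraction `p` of them.
// p = 0.31 is an assumed average, not a value from this project.
function problemsFound(users: number, p: number = 0.31): number {
  return 1 - Math.pow(1 - p, users);
}

// Additional share of problems revealed by the last tester added.
function marginalGain(users: number, p: number = 0.31): number {
  return problemsFound(users, p) - problemsFound(users - 1, p);
}
```

With p = 0.31, `problemsFound(5)` evaluates to roughly 0.84, in the same range as the figure quoted above, and `marginalGain` shrinks with every tester added, which is the "same things again and again" effect.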

We performed user interviews with our candidates, one at a time. The interviews were done remotely, with the following participants:

Interviewer - RUBICON

User testing candidate - client

Silent observers - the client and RUBICON

The interviewer would guide the candidate through release version 01 with a series of essential questions (non-guiding, open-ended questions that encourage the candidate to think critically). The candidate, who had never seen the product before, logged into the system, went through the product, and voiced their concerns and suggestions out loud. Users came from different departments, so we had input from all of the personas we defined at the beginning of the project.

As a result, we had a list of improvements and additional features that needed to be developed in order for the product to deliver more value to all users. We also knew that we were on the right path, as 80% of our candidates asked for features that were in development at the time.

After analyzing the feedback, we hosted another Lean Inception Workshop for release version 02. This time, the workshop was organized remotely due to the COVID-19 pandemic. The purpose of this workshop was to extend the features and define the second project. We took the user testing results into consideration and defined our goals and features for release version 02. With all of those results combined, our team started working on the second phase of development.

Once we implement the features for release version 02, we will run a second wave of user testing, define the improvements we need to make, organize a new workshop, and start the process all over again.

Data Lake Management Portal

Data Lake Management Portal is a unified and user-friendly web portal and service, designed and developed to give an unlimited number of users quick and easy access to data in the Data Lake and the ability to share it. The portal enables users to browse, download, upload, request, share, and view available data within the company.

The portal contains the following features:

Functional Features

Users can connect to the portal using Azure AD Single sign-on

Search available data assets in the Data Lake

Support for the concept of applications and workspaces that are needed for specific user data use cases

Request data from data owners

Approve/reject requests for data access

Web-based file explorer that enables users to upload, download, and create files and folders in the underlying Data Lake

Expose portal features through API and secure connection via Azure AD service principal

Various event notifications: sending emails and app-to-user notifications

Add collaborators to the application workspace

Non-functional requirements

REST API documentation

Daily backups

System Security: Allowing access only to computer users. Users can connect to the portal using Azure AD; other systems and APIs can connect to the portal via service principal

Automation with CI/CD pipelines, testing, deployment, and infrastructure

Testability - unit and integration tests

Usability - simple, clean, and usable UX/UI

Scalability - on-demand scalability

Audit logs - for all user access and actions

Code quality standards - clean code, static code analysis

The Cloud Solution

The client requested a scalable cloud solution with the following requirements:

To be run on Azure cloud

Easy long-term maintenance

Stability

On-demand scalability

To use an existing Azure Active Directory

The goal was to create a cloud-native solution to support the requested features and requirements. The focus was on using Azure Platform as a Service (PaaS) offerings to keep operational effort to a minimum, so the client wouldn’t have to maintain and manage resources such as storage, servers, applications, services, etc.

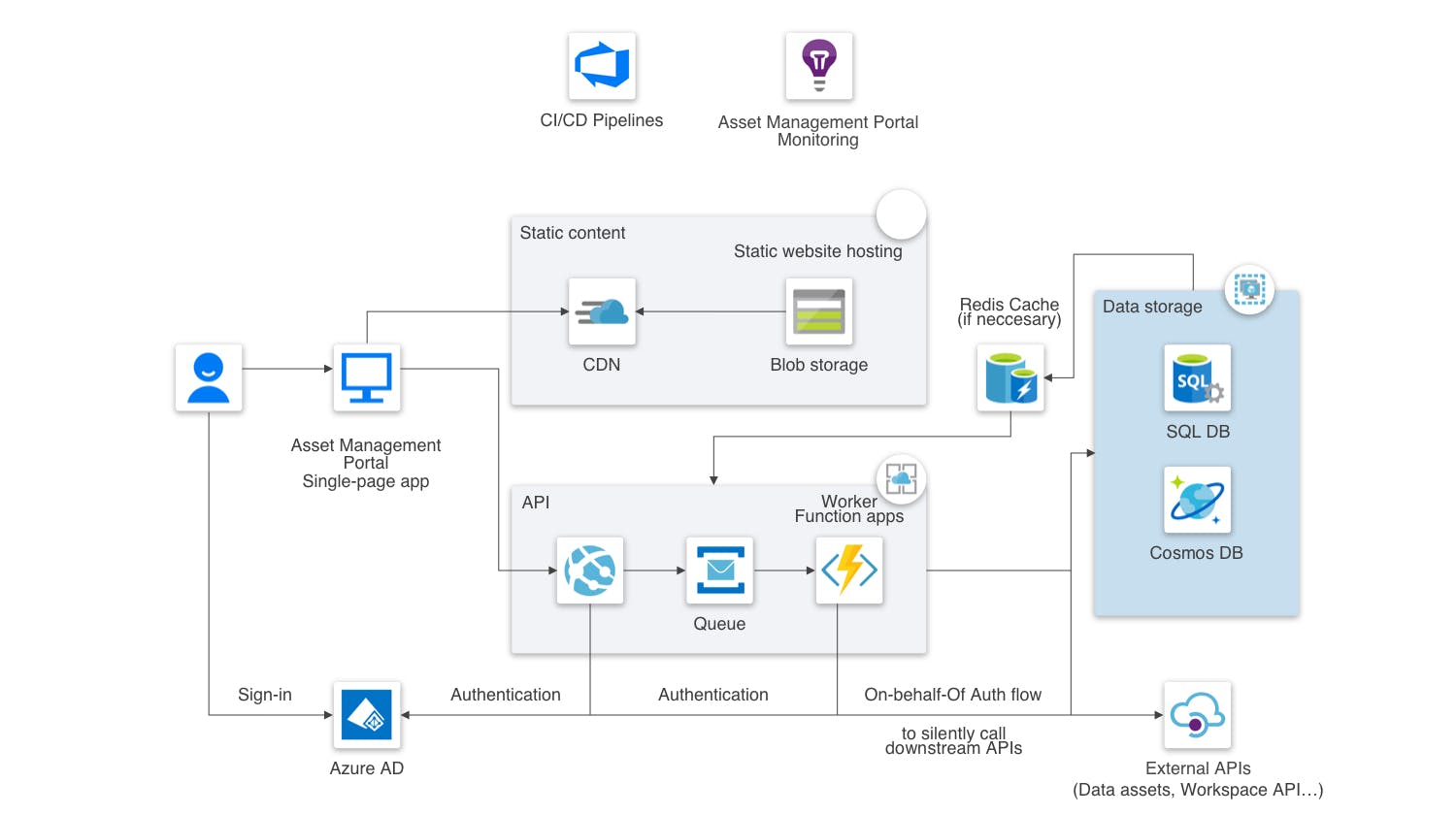

Following industry-proven practices for scalable and performant cloud solutions, RUBICON implemented the solution shown in the diagram below:

This architecture shows a front-end single-page application written in JavaScript that accesses back-end REST APIs. The solution serves static content from Azure Blob Storage and implements the back-end APIs with Azure App Services and Azure Functions. Users sign in to the web application by using their Azure AD credentials, and Azure AD returns an access token, which the application uses to authenticate API requests. Furthermore, the back-end APIs authenticate to and invoke other company services and APIs by implementing the OAuth 2.0 On-Behalf-Of authentication flow.
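The two token hops described above can be sketched as follows. This is a minimal illustration, not the project's code: the form parameter names follow the Microsoft identity platform v2.0 token endpoint, while the function names and every concrete value (tenant ID, client ID, secret, scope) are hypothetical placeholders.

```typescript
// Hop 1: the SPA attaches the Azure AD access token to each API request.
function bearerHeaders(accessToken: string): Record<string, string> {
  return { Authorization: "Bearer " + accessToken };
}

// Hop 2: the back-end API exchanges the user's token for a downstream token
// using the OAuth 2.0 On-Behalf-Of flow.
interface OboRequest {
  clientId: string;        // hypothetical: app registration of the back-end API
  clientSecret: string;    // hypothetical: would be read from a secret store
  userAccessToken: string; // the token the SPA sent to the back-end API
  downstreamScope: string; // hypothetical: scope of the downstream service
}

// Builds the form-encoded body that is POSTed to the token endpoint.
function buildOboTokenBody(req: OboRequest): string {
  const params: Record<string, string> = {
    grant_type: "urn:ietf:params:oauth:grant-type:jwt-bearer",
    client_id: req.clientId,
    client_secret: req.clientSecret,
    assertion: req.userAccessToken,
    scope: req.downstreamScope,
    requested_token_use: "on_behalf_of",
  };
  return Object.keys(params)
    .map((key) => encodeURIComponent(key) + "=" + encodeURIComponent(params[key]))
    .join("&");
}

// Token endpoint for a given Azure AD tenant.
function tokenEndpoint(tenantId: string): string {
  return "https://login.microsoftonline.com/" + tenantId + "/oauth2/v2.0/token";
}
```

In practice a library such as MSAL would normally perform this exchange; the sketch only makes the shape of the request visible. The token returned by the exchange carries the signed-in user's delegated permissions, which is what lets downstream services apply their own access rules.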

Architecture

The architecture includes the following components:

Blob Storage. Static web content, such as HTML, CSS, and JavaScript files, is stored in Azure Blob Storage and served to clients by using static website hosting. All dynamic interactions happen through JavaScript code making calls to the back-end APIs.

CDN. We used a CDN to cache content for lower latency and faster delivery.

Azure AD. Users sign in to the Data Lake Management Portal using their Azure AD credentials. Azure AD returns an access token for the backend API, which the Data Lake Management Portal uses to authenticate API requests.

App Service. We use App Service to build and host the RESTful API. The backend API is responsible for calling the client’s downstream APIs and uses the OAuth 2.0 On-Behalf-Of flow to propagate the delegated user identity and permissions. App Service provides autoscaling and high availability without having to manage infrastructure.

Queue. Background tasks, events, and messages are placed onto an Azure Queue storage queue. A queued message can then, for example, trigger a function app.

Function App. Function apps run the background tasks triggered by events or messages placed in the queue.

Data Storage. We use Azure SQL Database for relational data and Cosmos DB for non-relational data. For caching requirements, we use Azure Cache for Redis.

Azure DevOps Pipelines. These are used for continuous integration (CI) and continuous delivery (CD) to build, test, and deploy the solution.

Monitoring. We use the monitoring services readily available for Azure PaaS offerings, including Application Insights, Azure Monitor, and Log Analytics.
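The interplay between the Queue and Function App components can be illustrated with a small, self-contained sketch. It assumes an in-memory array as a stand-in for Azure Queue storage and a plain function as a stand-in for a queue-triggered Function. The message types and payload fields are hypothetical, since the case study does not describe the real payloads.

```typescript
// Hypothetical message shape for a background task.
interface TaskMessage {
  type: "send-email" | "index-file";
  payload: Record<string, string>;
}

// In-memory stand-in for an Azure Queue storage queue. Real code would use
// the Azure SDK; messages are serialized to strings as they would be there.
class InMemoryQueue {
  private messages: string[] = [];

  enqueue(message: TaskMessage): void {
    this.messages.push(JSON.stringify(message));
  }

  dequeue(): TaskMessage | undefined {
    const raw = this.messages.shift();
    return raw === undefined ? undefined : (JSON.parse(raw) as TaskMessage);
  }
}

// Plays the role of the Function app: handles one message per invocation.
function handleMessage(message: TaskMessage, log: string[]): void {
  switch (message.type) {
    case "send-email":
      log.push("email to " + message.payload.to);
      break;
    case "index-file":
      log.push("indexed " + message.payload.path);
      break;
  }
}

// Drains the queue, invoking the handler once per message, and returns the
// number of messages handled.
function drain(queue: InMemoryQueue, log: string[]): number {
  let handled = 0;
  let next = queue.dequeue();
  while (next !== undefined) {
    handleMessage(next, log);
    handled++;
    next = queue.dequeue();
  }
  return handled;
}
```

The point of the pattern is that the API only enqueues a message and returns immediately, while slower work such as sending notification emails happens outside the request path.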

DevOps

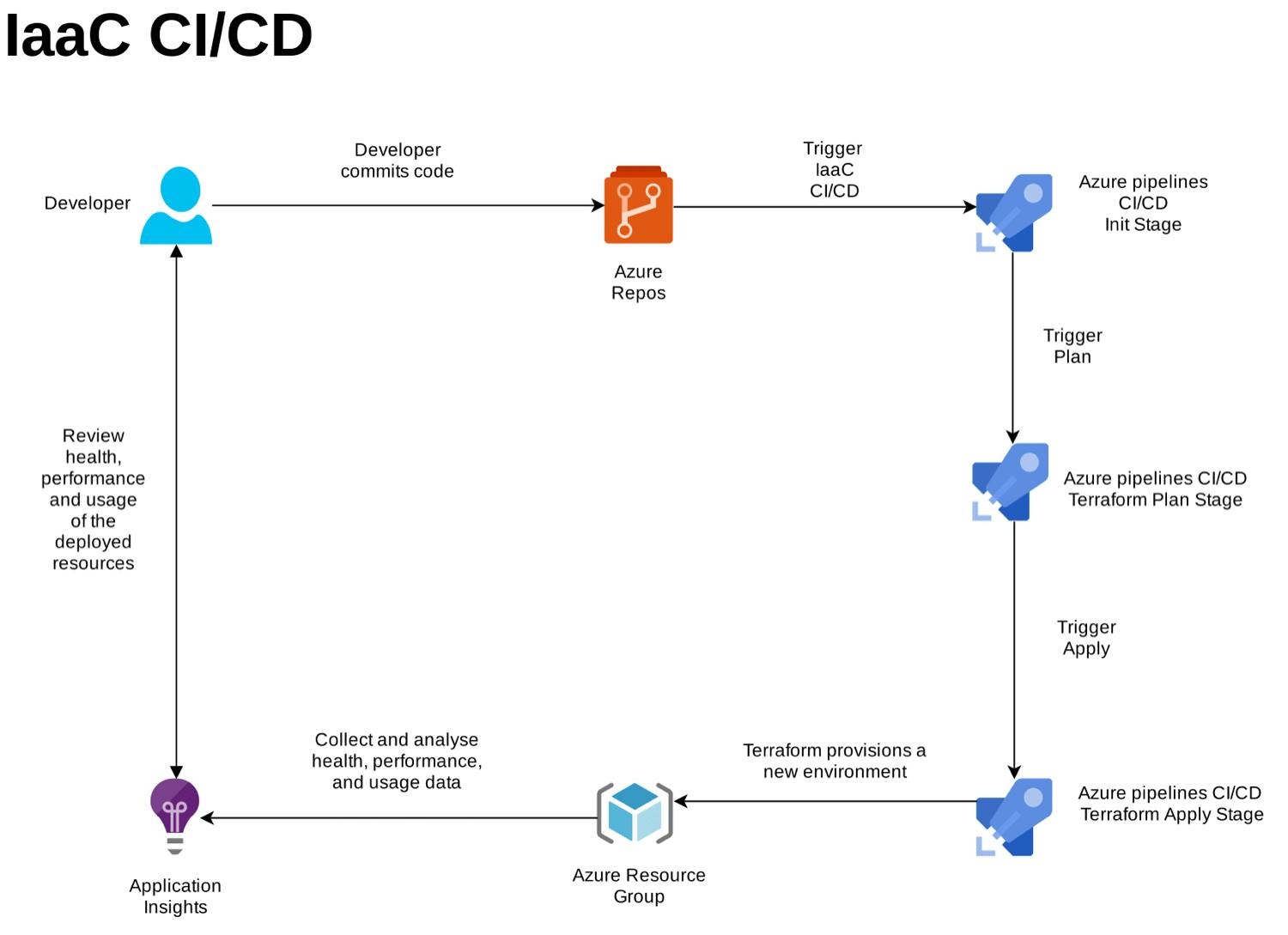

For provisioning Azure resources, we used Terraform and Terragrunt. Using these templates makes it easier to automate deployments and provision different environments in minutes, for example, to replicate load testing environments only when needed, which saves costs.

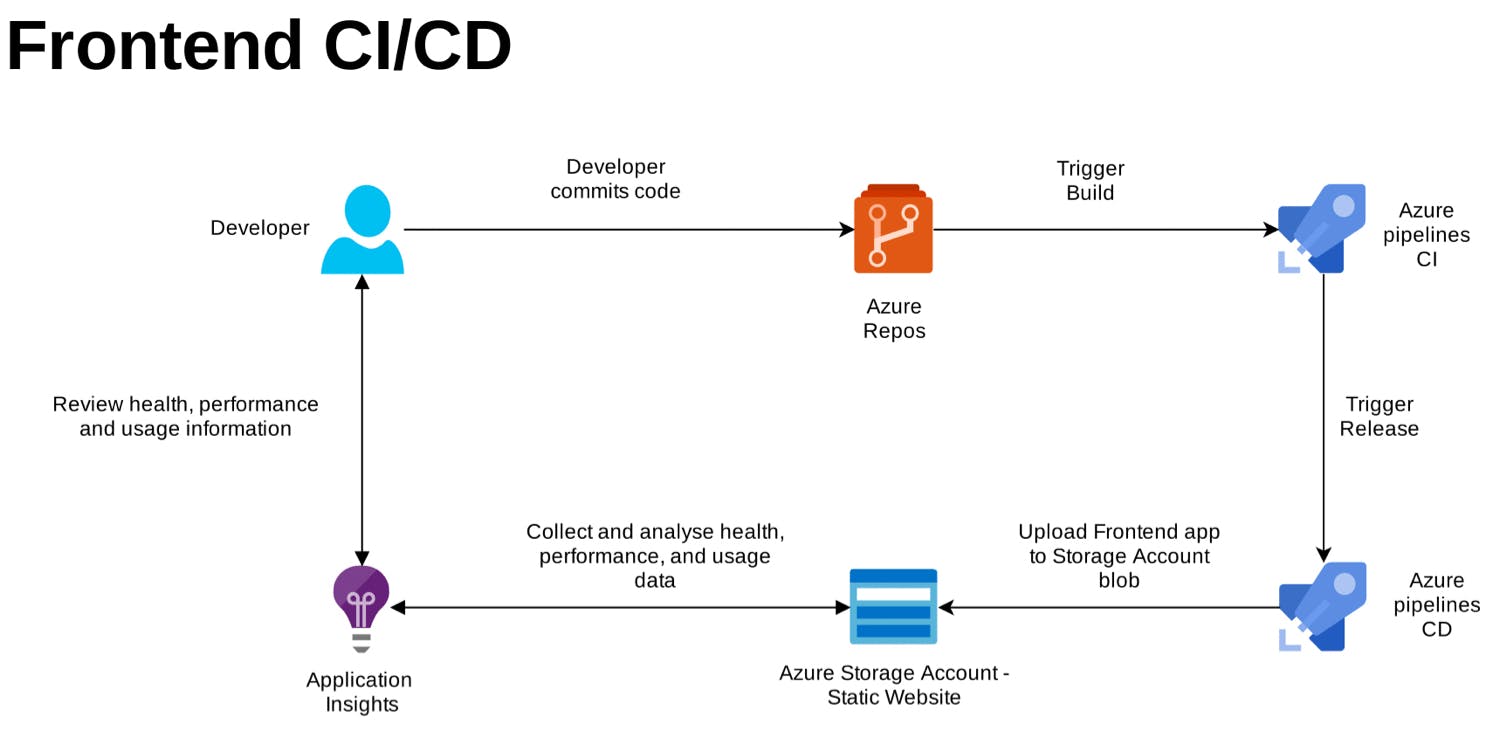

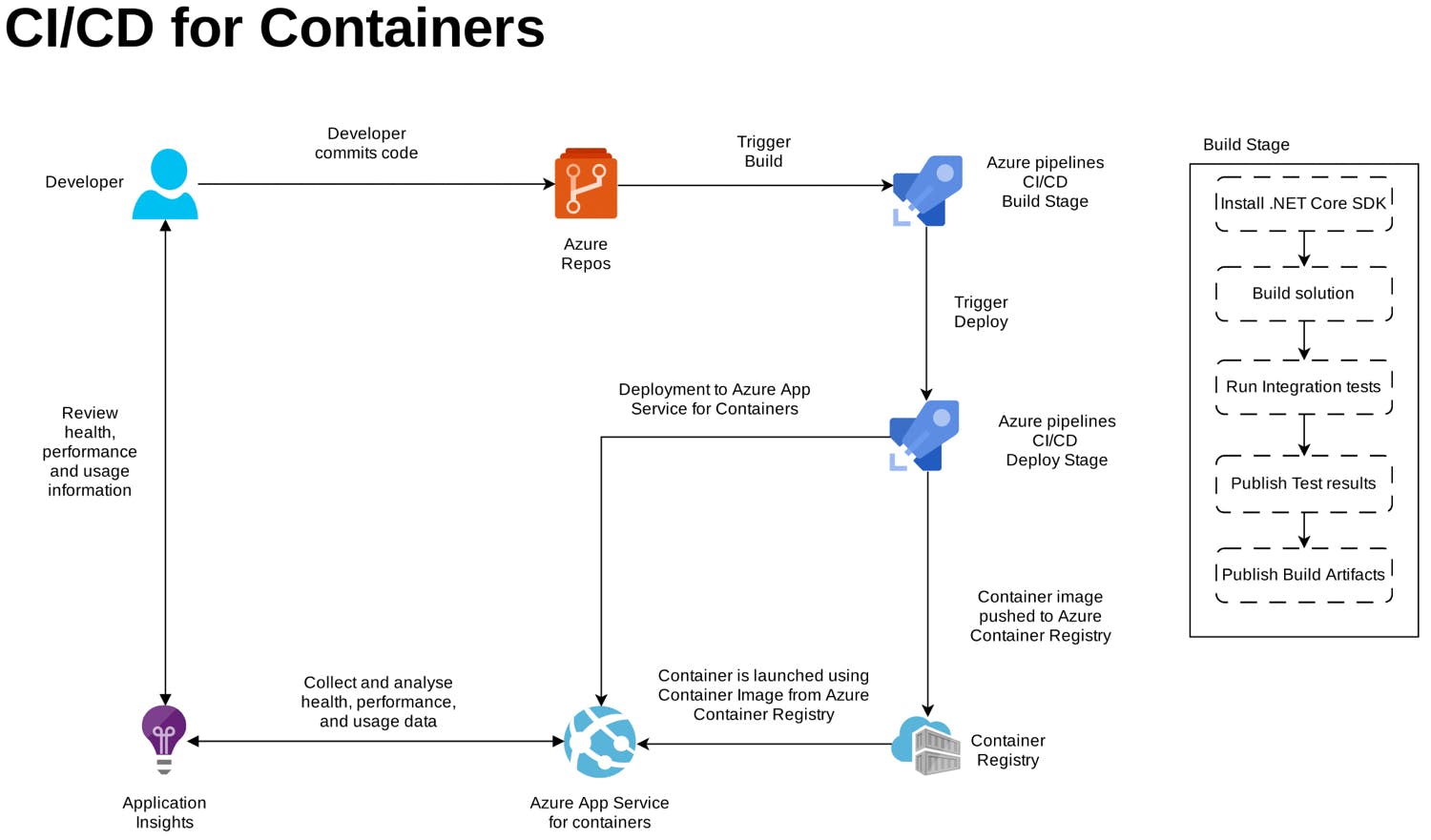

Our DevOps team implemented continuous integration (CI) and continuous deployment (CD) pipelines using Azure DevOps Pipelines services that automatically build, test, and deploy every source code change. Azure DevOps provides Repos for source code control, Pipelines for CI/CD, Artifacts to host build artifacts, and Boards for developer collaboration and coordination. With these processes in place, we focus on the development of the applications rather than the management of the supporting infrastructure.

Frontend CI is configured to trigger on every git push to feat, fix, and chore branches. The Build stage includes jobs and tasks that clone the repo, install npm tools, build the solution, run unit tests, and then package and publish artifacts to Azure Artifacts. When the source branch is merged, the Build Pipeline triggers the Release Pipeline.

Backend CI/CD for Containers is one pipeline with a Build stage and a Deploy stage. The Build stage installs the .NET Core SDK, builds the solution, runs integration tests using Key Vault secrets, and publishes test results and build artifacts. The Deploy stage is skipped unless the source branch is develop or master. It contains two tasks: one builds and pushes the Docker image to the Container Registry, and the other deploys the container image to Azure App Service for containers.
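The branch rules from the two pipelines above can be expressed as a pair of predicates. This is an illustrative sketch only: in the real pipelines such rules live in the Azure DevOps pipeline definitions, and the assumption that branches are named "<prefix>/<description>" is ours.

```typescript
// Branch prefixes that trigger the front-end CI build (taken from the text).
const FRONTEND_CI_PREFIXES = ["feat", "fix", "chore"];

// Branches for which the back-end Deploy stage runs (taken from the text).
const DEPLOY_BRANCHES = ["develop", "master"];

// Azure DevOps reports branches as "refs/heads/<name>"; strip that prefix.
function shortBranchName(ref: string): string {
  return ref.replace(/^refs\/heads\//, "");
}

// Assumption: branches are named "<prefix>/<description>", e.g. "feat/search".
function triggersFrontendCi(ref: string): boolean {
  const prefix = shortBranchName(ref).split("/")[0];
  return FRONTEND_CI_PREFIXES.indexOf(prefix) !== -1;
}

// The Deploy stage is skipped unless the source branch is develop or master.
function runsDeployStage(ref: string): boolean {
  return DEPLOY_BRANCHES.indexOf(shortBranchName(ref)) !== -1;
}
```

For example, `triggersFrontendCi("feat/search")` is true while `runsDeployStage("feat/search")` is false, so a feature branch is built and tested but never deployed.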

The initialization stage of the infrastructure as code (IaC) pipeline creates a resource group with a storage account and a container that is used to hold the remote state. In the Plan stage, we validate the configuration and create the plan for the infrastructure, but before we can do that, we first need to download Terragrunt and the secrets file located in Azure DevOps. Finally, in the Apply stage, after the manual approval check, we apply our changes to the infrastructure.
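The stage ordering and the approval gate described above can be modeled in a few lines. This is a simplified sketch with hypothetical names; it models only the control flow and does not invoke Terraform or Terragrunt.

```typescript
type Stage = "init" | "plan" | "apply";

interface RunResult {
  executed: Stage[];  // stages that ran, in order
  blockedAt?: Stage;  // set when the run stopped waiting for approval
}

// Runs the stages in order; "apply" only runs once manual approval is given.
function runIacPipeline(approved: boolean): RunResult {
  const order: Stage[] = ["init", "plan", "apply"];
  const executed: Stage[] = [];
  for (const stage of order) {
    if (stage === "apply" && !approved) {
      return { executed, blockedAt: stage };
    }
    executed.push(stage);
  }
  return { executed };
}
```

Keeping the plan and apply steps in separate stages means a reviewer can inspect the planned changes before anything touches the real infrastructure.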

Results

After only 7 months of development, we managed to:

Develop a secure web portal with a simple and modern UX/UI design

Easily enable users to read and write files from an underlying data lake

Successfully help the client say goodbye to their traditional business ways and transition into using their new software

Make the client’s operations more efficient and automated

Technology stack

C#, ASP.NET Core 3.1, Clean Architecture, CQRS, MediatR, Entity Framework Core, Dapper, xUnit, Moq, FluentAssertions, Microsoft Azure Cloud (SQL Database, Cosmos DB, Queue Storage, Table Storage, Blob Storage, Functions, Active Directory, Microsoft identity platform, App Service, Container Registry, Application Insights, Log Analytics), TypeScript, React, Jest, Enzyme, Git, Azure DevOps (Boards, Repos, Pipelines), Docker, Terraform, Terragrunt